This workshop aims to promote discussion among researchers investigating innovative tensor-based approaches to computer vision problems. Tensors are a crucial mathematical object in many computer vision and machine learning applications. They have been an essential ingredient in modelling latent semantic spaces, higher-order data factorization, and higher-order information in visual data, and have found numerous applications in active areas of computer vision including, but not limited to, human action recognition, object recognition, and video understanding. Moreover, tensor-based algorithms are increasingly finding significant applications in deep learning. With the rise of big data, tensors may yet prove crucial both for understanding deep architectures and for aiding robust learning and generalization in inference algorithms.

TOPICS

We encourage discussions on recent advances, ongoing developments, and novel applications of multi-linear algebra, optimization, and feature representations using tensors. We are soliciting original contributions that address a wide range of theoretical and practical issues including, but not limited to:

- Tensor methods in deep learning

- Supervised learning in computer vision

- Unsupervised feature learning and multimodal representations

- Tensors in low-level feature design

- Mid-level representations with tensor methods

- Low-rank factorisation methods and denoising approaches

- Latent topic models using tensor methods

- Tensors in optimization and dictionary learning

- Tensor hardware architectures

- Advancements in multi-linear algebra

- Riemannian geometry and SPD matrices

- Applications of tensors for:

  - image/video recognition

  - object recognition

  - scene understanding

  - industrial and medical applications

- Other related topics not listed above

SCHEDULE

Below is the program of the workshop on the 26th of July, 2017. Bear in mind that there may be some last-minute reshuffling of the schedule due to unforeseen clashes in our speakers' timetables. Please check the Detailed Program below for the abstracts and biographies of our invited speakers (or click on the links in the tables). You can also click /download/ for presentations in PDF format.

Morning Session

| Time | Invited Speaker | Title |
|---|---|---|
| 09:00 | Prof. Fatih Porikli | Welcome |
| 09:05 | Prof. Animashree Anandkumar | Role of Tensors in Deep Learning /download/ |
| 09:35 | Dr. Andrzej Cichocki | Tensor Networks for Deep Learning /download/ |
| 10:05 | Morning break | Kamehameha II |
| 10:30 | Dr. Nadav Cohen | Analysis and Design of Convolutional Networks via Hierarchical Tensor Decompositions /download/ |
| 11:00 | Prof. René Vidal | Globally Optimal Structured Low-Rank Matrix and Tensor Factorization /download/ |
| 11:30 | Dr. Ivan Oseledets | Deep Learning and Tensors for the Approximation of Multivariate Functions: Recent Results and Open Problems /download/ |
| 12:00 | Lunch break | Kamehameha II |

Oral Papers

| Time | Speaker | Title |
|---|---|---|
| 13:30 | Liuqing Yang, Evangelos E. Papalexakis | Exploration of Social and Web Image Search Results Using Tensor Decomposition /download/ |
| 13:40 | Mihir Paradkar, Madeleine Udell | Graph-Regularized Generalized Low-Rank Models /download/ |
| 13:50 | Huizi Mao, Song Han, Jeff Pool, Wenshuo Li, Xingyu Liu, Yu Wang, William J. Dally | Exploring the Granularity of Sparsity in Convolutional Neural Networks /download/ |
| 14:00 | Chan-Su Lee | Human Action Recognition Using Tensor Dynamical System Modeling /download/ |
| 14:10 | Jean Kossaifi, Aran Khanna, Zachary Lipton, Tommaso Furlanello, Anima Anandkumar | Tensor Contraction Layers for Parsimonious Deep Nets /download/ |

Afternoon Session

| Time | Invited Speaker | Title |
|---|---|---|
| 14:20 | Prof. Richard Hartley | Learning Methods and Optimization on Matrix Manifolds and Matrix Lie Groups /download/ |
| 14:50 | Dr. M. Alex O. Vasilescu | You've got Data, We've Got Tensors: Linear and Multilinear Tensor Models for Computer Vision, Graphics and Machine Learning /download/ |
| 15:20 | Afternoon break | Kamehameha II |
| 15:50 | Otto Debals and Dr. Lieven De Lathauwer | Numerical Optimization Algorithm for Tensor-based Recognition /download/ |
| 16:20 | Dr. Lior Horesh | A New Tensor Algebra - Theory and Applications /download/ |
| 16:50 | Prof. Luc Florack | Redeeming the Clinical Promise of Diffusion MRI in Support of the Neurosurgical Workflow /download/ |
| 17:20 | Closing remarks | |

INFORMATION

- The CMT site is now open for submissions. New users can register and log in now.

- The call for papers in PDF format can be accessed here.

- Regarding the submission guidelines, we will follow the standard CVPR rules, which can be accessed here.

- Remember that registration on the CVPR'17 webpage is mandatory for everyone participating in the workshop.

- The workshop is scheduled to be in room 311. However, please be sure to check for any changes on the schedule stand located near the reception in the Convention Center.

- We are uploading presentations and photos from the workshop online (awaiting the consent of the speakers).

DETAILED PROGRAM

Below is the list of speakers (in alphabetical order) who have kindly agreed to give talks during the workshop:

- Dr. Andrzej Cichocki (Brain Science Institute RIKEN and SKOLTECH)

Title: Tensor Networks for Deep Learning.

Abstract: Tensor decompositions and their generalizations, tensor networks, are promising, emerging tools in deep learning, since input/output data as well as the outputs of hidden layers can be naturally represented and described as higher-order tensors, and most operations can be performed using optimized linear/multilinear algebra. We discuss several applications of tensor networks in deep learning for computer vision, especially the possibility of a dramatic reduction in the number of parameters of state-of-the-art deep CNNs, typically from hundreds of millions to only tens of thousands of parameters. We focus on novel (Quantized) Tensor Train-Tucker (QTT-Tucker) and Hierarchical Tucker (QHT) tensor network models for higher-order tensors (tensors of order four or higher). Moreover, we present tensor sketching for efficient dimensionality reduction, which avoids the curse of dimensionality. Tensor Train-Tucker and HT models will be naturally extended to MERA models, Tree Tensor Network States (TTNS), PEPS/PEPO and other 2D/3D tensor networks, with improved expressive power of deep learning in convolutional neural networks and inspiration to generate novel architectures of deep and semi-shallow neural networks. Furthermore, we will show how to apply tensor networks to higher-order, multiway, partially restricted Boltzmann machines (RBM) with a substantial reduction in the number of learning parameters.
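To give a feel for the parameter reduction mentioned in the abstract, here is a minimal sketch of a plain tensor-train decomposition computed with sequential truncated SVDs (the TT-SVD scheme). It is not the QTT-Tucker or QHT model of the talk; the function names `tt_svd` and `tt_to_full`, the tensor shape, and the rank are assumptions chosen for illustration.

```python
import numpy as np

def tt_svd(tensor, max_rank):
    """Split `tensor` into tensor-train cores using sequential truncated SVDs."""
    dims = tensor.shape
    cores, rank_prev = [], 1
    mat = tensor.reshape(dims[0], -1)
    for k in range(len(dims) - 1):
        u, s, vt = np.linalg.svd(mat, full_matrices=False)
        rank = min(max_rank, len(s))
        # Core k has shape (left rank, mode size, right rank).
        cores.append(u[:, :rank].reshape(rank_prev, dims[k], rank))
        # Carry the remainder forward and fold the next mode into the rows.
        mat = (s[:rank, None] * vt[:rank]).reshape(rank * dims[k + 1], -1)
        rank_prev = rank
    cores.append(mat.reshape(rank_prev, dims[-1], 1))
    return cores

def tt_to_full(cores):
    """Contract the cores back into a full tensor."""
    full = cores[0]
    for core in cores[1:]:
        full = np.tensordot(full, core, axes=1)  # last axis of `full` with first of `core`
    return full.squeeze(axis=(0, -1))

# Hypothetical order-4 tensor built to have tensor-train ranks equal to 2.
rng = np.random.default_rng(0)
dims, true_rank = (4, 5, 6, 7), 2
ranks = [1, true_rank, true_rank, true_rank, 1]
true_cores = [rng.standard_normal((ranks[i], dims[i], ranks[i + 1])) for i in range(4)]
full = tt_to_full(true_cores)

cores = tt_svd(full, max_rank=true_rank)
n_tt_params = sum(core.size for core in cores)
print("entries in full tensor:", full.size)
print("entries in TT cores:   ", n_tt_params)
print("exact reconstruction:  ", np.allclose(tt_to_full(cores), full))
```

The storage of the cores grows linearly with the number of modes for fixed ranks, whereas the full tensor grows exponentially; real network weights are only approximately low-rank, so in practice the truncation introduces an approximation error.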

Biography: Andrzej Cichocki received the M.Sc. (with honors), Ph.D. and Dr.Sc. (Habilitation) degrees, all in electrical engineering, from Warsaw University of Technology (Poland). He spent several years at University Erlangen (Germany) as an Alexander-von-Humboldt Research Fellow and Guest Professor. He is currently a Senior Team Leader and Head of the Laboratory for Advanced Brain Signal Processing at RIKEN Brain Science Institute (Japan) and PI of the grant devoted to deep learning and tensor networks. He is the author of more than 400 technical journal papers and 6 monographs in English (two of them translated into Chinese). He has very recently published two monographs in Foundations and Trends in Machine Learning (FTML) devoted to tensor networks for large-scale optimization problems and supervised and unsupervised learning: "Tensor Networks for Dimensionality Reduction and Large-Scale Optimizations, Part 1 Low-Rank Tensor Decompositions and Part 2 Applications and Future Perspectives". See http://www.bsp.brain.riken.jp for more details.

References:

- Cichocki, A., Lee, N., Oseledets, I., Phan, A. H., Zhao, Q., & Mandic, D. P. (2016). Tensor Networks for Dimensionality Reduction and Large-scale Optimization: Part 1 Low-Rank Tensor Decompositions. Foundations and Trends® in Machine Learning, 9(4-5), 249-429.

- Cichocki, A., Phan, A. H., Zhao, Q., Lee, N., Oseledets, I., Sugiyama, M., & Mandic, D. P. (2017). Tensor Networks for Dimensionality Reduction and Large-scale Optimization: Part 2 Applications and Future Perspectives. Foundations and Trends® in Machine Learning, 9(6), 431-673.

- Cichocki, A., Mandic, D., De Lathauwer, L., Zhou, G., Zhao, Q., Caiafa, C., & Phan, H. A. (2015). Tensor Decompositions for Signal Processing Applications: From Two-way to Multiway Component Analysis. IEEE Signal Processing Magazine, 32(2), 145-163.

- Prof. Animashree Anandkumar (University of California Irvine, AMAZON AI)

Title: Role of Tensors in Deep Learning.

Abstract: Tensor contractions are extensions of matrix products to higher dimensions. We show that tensor contractions are an effective replacement for fully connected layers in deep learning architectures. They result in significant space savings with negligible performance degradation. This is because tensor contractions retain the multi-dimensional dependencies in activation tensors, while fully connected layers flatten them into matrices and lose this information. Tensor contractions present rich opportunities for hardware optimizations through extended BLAS kernels. We propose a new primitive known as StridedBatchedGEMM in cuBLAS 8.0 that significantly speeds up tensor contractions and avoids explicit copies and transpositions. We also introduce tensor regression in the output layer of the networks and establish further space savings. These functionalities are available in the MXNet deep learning package.
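The space saving described in the abstract can be illustrated with a small NumPy sketch. It contracts each non-batch mode of an activation tensor with its own small factor matrix and compares the parameter count with the dense matrix that would act on the flattened activations. The shapes, the variable names, and the helper `tensor_contraction_layer` are assumptions made for illustration; this is not the MXNet implementation mentioned in the talk.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical activations: batch of 8 with modes (channels, height, width) = (32, 7, 7).
activations = rng.standard_normal((8, 32, 7, 7))

# One factor matrix per non-batch mode, mapping (32, 7, 7) -> (16, 4, 4).
w_c = rng.standard_normal((16, 32))
w_h = rng.standard_normal((4, 7))
w_w = rng.standard_normal((4, 7))

def tensor_contraction_layer(x, w_c, w_h, w_w):
    """Contract each non-batch mode of x with its factor matrix, keeping the tensor structure."""
    return np.einsum("bchw,ic,jh,kw->bijk", x, w_c, w_h, w_w)

out = tensor_contraction_layer(activations, w_c, w_h, w_w)

# The same linear map written as one dense matrix acting on flattened activations.
dense = np.kron(np.kron(w_c, w_h), w_w)  # shape (16*4*4, 32*7*7)
out_dense = (activations.reshape(8, -1) @ dense.T).reshape(out.shape)

n_contraction_params = w_c.size + w_h.size + w_w.size
n_dense_params = dense.size
print("contraction layer parameters:", n_contraction_params)
print("dense layer parameters:      ", n_dense_params)
print("same output:", np.allclose(out, out_dense))
```

The contraction layer is a structured special case of the dense layer: a general fully connected layer of that size has many more free parameters, which is where the saving comes from.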

Biography: Anima Anandkumar is a principal scientist at Amazon Web Services, and is currently on leave from U.C. Irvine, where she is an associate professor. Her research interests are in the areas of large-scale machine learning, non-convex optimization and high-dimensional statistics. In particular, she has been spearheading the development and analysis of tensor algorithms. She is the recipient of several awards such as the Alfred P. Sloan Fellowship, Microsoft Faculty Fellowship, Google Research Award, ARO and AFOSR Young Investigator Awards, NSF CAREER Award, Early Career Excellence in Research Award at UCI, Best Thesis Award from the ACM SIGMETRICS society, IBM Fran Allen PhD Fellowship, and several best paper awards. She has been featured in a number of forums such as the Quora ML session, Huffington Post, Forbes, O’Reilly Media, and so on. She received her B.Tech in Electrical Engineering from IIT Madras in 2004 and her PhD from Cornell University in 2009. She was a postdoctoral researcher at MIT from 2009 to 2010, an assistant professor at U.C. Irvine between 2010 and 2016, and a visiting researcher at Microsoft Research New England in 2012 and 2014.

- Dr. Ivan Oseledets (Skolkovo Institute of Science and Technology (SKOLTECH))

Title: Tensor Networks and Deep Learning.

Abstract: In this talk I will give an overview of several connections between tensors and deep learning, and also describe some results from tensor approximation which can be useful in deep learning applications, and vice versa. I will also present some results on the application of deep learning techniques in an area where they may find another use: the fast solution of problems of mathematical physics.

Biography: Ivan graduated from the Moscow Institute of Physics and Technology in 2006, defended his Ph.D. thesis in 2007 and his “doctor of science” dissertation in 2012. He has been an Associate Professor at Skoltech since August 2013, where he leads the Scientific Computing Group. He has worked at the Institute of Numerical Mathematics of the Russian Academy of Sciences since 2003, part time since 2013. Ivan’s research focuses on the development of breakthrough numerical techniques (matrix and tensor methods) for solving a broad range of high-dimensional problems, and also on factorisation methods for machine learning and deep learning. Ivan is the author of more than 50 published/accepted papers in high-profile numerical mathematics and computational physics journals and in A*-rated conferences. He is also a member of the Editorial Board of the journal Advances in Computational Mathematics.

- Dr. Lieven De Lathauwer (KU Leuven)

Title: Numerical Optimization Algorithm for Tensor-based Recognition.

Abstract: Face recognition is influenced by multiple parameters such as illumination, pose and expression. While matrices are limited to a single mode of variation, tensors can naturally accommodate different modes of variation. The multilinear singular value decomposition (MLSVD), for example, allows the description of each mode by a factor matrix and the representation of the interactions between modes by a core coefficient tensor. In this talk, we will show that each image satisfying an MLSVD model can be expressed as a structured linear system called a Kronecker product equation (KPE). The solution of a KPE of a similar image then yields a feature vector that allows one to recognize the respective person with high accuracy. Furthermore, using multiple images of the same person under different conditions can lead to more robust results based on a coupled KPE. It follows that our method can be used to update the database with an additional person using only a few images instead of images for each combination of parameter values.
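As a rough illustration of the MLSVD model in the abstract, the sketch below computes one factor matrix per mode from the SVD of the corresponding unfolding and then forms the core tensor. The tensor dimensions (persons, illuminations, poses, pixels) and the random data are placeholders, and the helper names are assumptions; the Kronecker product equation step of the talk is not implemented here.

```python
import numpy as np

def unfold(tensor, mode):
    """Arrange the mode-`mode` fibres of `tensor` as the rows' index of a matrix."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)

def mode_product(tensor, matrix, mode):
    """Multiply `tensor` along `mode` by `matrix` (shape: new_size x old_size)."""
    moved = np.moveaxis(tensor, mode, 0)
    return np.moveaxis(np.tensordot(matrix, moved, axes=(1, 0)), 0, mode)

def mlsvd(tensor):
    """Return the core tensor and one orthonormal factor matrix per mode."""
    factors = [np.linalg.svd(unfold(tensor, m), full_matrices=False)[0]
               for m in range(tensor.ndim)]
    core = tensor
    for mode, u in enumerate(factors):
        core = mode_product(core, u.T, mode)
    return core, factors

# Placeholder data: 5 persons x 4 illuminations x 3 poses x 20 pixels.
rng = np.random.default_rng(0)
faces = rng.standard_normal((5, 4, 3, 20))

core, factors = mlsvd(faces)

# Rebuild the data from the core tensor and the factor matrices.
rebuilt = core
for mode, u in enumerate(factors):
    rebuilt = mode_product(rebuilt, u, mode)

print("factor shapes:", [u.shape for u in factors])
print("exact reconstruction:", np.allclose(rebuilt, faces))
```

Each row of the person-mode factor matrix acts as a coefficient vector for one person across all illuminations and poses, which is the kind of feature vector the abstract refers to.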

Biography: Lieven De Lathauwer received the Ph.D. degree in applied sciences from KU Leuven, Belgium, in 1997. From 2000 to 2007 he was Research Associate of the French Centre National de la Recherche Scientifique (CNRS-ETIS). He is currently Professor at KU Leuven, affiliated with both the Group Science, Engineering and Technology of Kulak, and with the group STADIUS of the Electrical Engineering Department (ESAT). He is Associate Editor of the SIAM Journal on Matrix Analysis and Applications and he has served as Associate Editor for the IEEE Transactions on Signal Processing. He is Fellow of the IEEE. His research concerns the development of tensor tools for mathematical engineering. It centers on the following axes: (i) algebraic foundations, (ii) numerical algorithms, (iii) generic methods for signal processing, data analysis and system modelling, and (iv) specific applications. Keywords are linear and multilinear algebra, numerical algorithms, statistical signal and array processing, higher-order statistics, independent component analysis and blind source separation, harmonic retrieval, factor analysis, blind identification and equalization, big data, data fusion. Algorithms have been made available as Tensorlab (www.tensorlab.net) (with N. Vervliet, O. Debals, L. Sorber and M. Van Barel).

Notice: Mr. Otto Debals may fill in for Dr. De Lathauwer during this talk. Otto Debals obtained the M.Sc. degree in Mathematical Engineering from KU Leuven, Belgium, in 2013. He is a Ph.D. candidate affiliated with the Group Science, Engineering and Technology of Kulak, KU Leuven and with the STADIUS Center for Dynamical Systems, Signal Processing and Data Analytics of the Electrical Engineering Department (ESAT), KU Leuven. His research concerns the tensorization of matrix data, with further interests in tensor decompositions, optimization, blind signal separation and blind system identification.

- Dr. Lior Horesh (IBM T.J. Watson Research Center and Columbia University)

Title: A New Tensor Algebra - Theory and Applications.

Abstract: Tensors are instrumental in revealing latent correlations residing in high-dimensional spaces. Despite their applicability to a broad range of applications in machine learning, speech recognition, and imaging, inconsistencies between tensor and matrix algebra have been impeding their broader utility. Researchers seeking to overcome those discrepancies have introduced several different candidate extensions, each with its own advantages and challenges. In this tutorial, we shall review some of the common tensor algebra definitions, discuss their limitations, and introduce the new t-tensor product algebra, which permits the elegant extension of linear algebraic concepts and algorithms to tensors. Following the introduction to fundamental tensor operations, we discuss tensor decompositions in further depth, in particular the tensor SVD (t-SVD). Following a discussion of the theoretical grounds of the decomposition, we will review numerical results demonstrating the promise of the formulation for face recognition tasks as well as for model reduction applications.
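The sketch below assumes that the t-tensor product in the abstract is the t-product of third-order tensors, in which tubes along the third mode are combined by circular convolution. Under that assumption the product can be computed slice by slice in the Fourier domain, and the code checks this against the direct circular-convolution definition. The function names and tensor sizes are illustrative only.

```python
import numpy as np

def t_product(a, b):
    """t-product of a (n1 x n2 x n3) and b (n2 x n4 x n3) via FFT along the third mode."""
    a_hat = np.fft.fft(a, axis=2)
    b_hat = np.fft.fft(b, axis=2)
    # Ordinary matrix product of matching frontal slices in the Fourier domain.
    c_hat = np.einsum("ijk,jlk->ilk", a_hat, b_hat)
    return np.real(np.fft.ifft(c_hat, axis=2))

def t_product_direct(a, b):
    """Same product from the definition: circular convolution of frontal slices."""
    n3 = a.shape[2]
    c = np.zeros((a.shape[0], b.shape[1], n3))
    for k in range(n3):
        for s in range(n3):
            c[:, :, k] += a[:, :, (k - s) % n3] @ b[:, :, s]
    return c

rng = np.random.default_rng(0)
a = rng.standard_normal((3, 4, 5))
b = rng.standard_normal((4, 2, 5))

# Identity tensor: identity matrix in the first frontal slice, zeros elsewhere.
identity = np.zeros((4, 4, 5))
identity[:, :, 0] = np.eye(4)

print("FFT and direct forms agree:", np.allclose(t_product(a, b), t_product_direct(a, b)))
print("identity acts as identity: ", np.allclose(t_product(identity, b), b))
```

Because the product reduces to independent matrix products in the Fourier domain, matrix factorizations such as the SVD can be applied slice by slice there, which is the idea behind the t-SVD mentioned in the abstract.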

Biography: Dr. Lior Horesh is a Research Staff Member and a Master Inventor at the Quantum Computing Algorithms group of IBM T.J. Watson Research Center. In addition, Dr. Horesh serves as an Adjunct Associate Professor at the Computer Science Department of Columbia University. Prior to his current role, Dr. Horesh was a post-doctoral associate at the Department of Mathematics and Computer Sciences of Emory University, where he studied various aspects of inverse problems and numerical simulation. Horesh holds a PhD from University College London, UK, where he worked on the development of reconstruction algorithms for electromagnetic and electro-optic imaging. Horesh has co-authored over 60 peer-reviewed publications and over 30 patent disclosures, and has given dozens of invited talks at top-rated conferences and workshops, including a plenary talk at the SIAM Imaging Science Conference. His recent activity focuses on bridging the gap between first-principles and data-driven modeling through meta-level learning and optimal design. His current research addresses theoretical and applied aspects of numerical tensor algebra, with applications to large-scale numerical simulation, inverse problems and machine learning.

- Prof. Luc Florack (Eindhoven University of Technology)

Title: Redeeming the Clinical Promise of Diffusion MRI in Support of the Neurosurgical Workflow.

Abstract: The brain is in vogue. The reason is twofold: scientific curiosity and sheer health-economic necessity. To illustrate the latter, in 2012 a study group commissioned by the European Brain Council (www.braincouncil.eu) investigated the economic impact of brain diseases in Europe over the year 2010, estimating costs at a staggering 800 billion euro. Due to ageing and modern lifestyle, odds are that these costs will continue to increase for years to come. Insight into the (generic as well as patient-specific) structure and function of the brain is a condition for sustainable healthcare.

There are many levels of aggregation in brain research, employing different disciplines and viewpoints, and focusing on different scientific aspects or application targets. In my presentation I will focus on neural fiber pathways in the brain from an empirical point of view, based on diffusion magnetic resonance imaging, for the purpose of neurosurgery. I will highlight the (potential) role of tensor calculus and differential geometry in this endeavor, and put everything into the holistic perspective of a 'pipeline' that links up elements ranging from fundamental quantum physics (notably nuclear magnetic resonance in hydrogen spin systems) to practical challenges in the daily workflow of the neurosurgeon. Clinical skepticism forces us to pay attention to the 'weakest' link in the pipeline. I will indicate how this may be ameliorated in practice, and show some promising results obtained from preclinical feasibility studies on risk assessment and planning of temporal lobe resection therapy in epilepsy patients (work done in collaboration with Kempenhaeghe, a leading centre of expertise in The Netherlands, offering diagnosis and treatment to patients suffering from a complex form of epilepsy, a sleeping disorder and/or neurological learning disabilities).

Biography: Luc Florack received his MSc degree in theoretical physics in 1989 and his PhD degree in 1993 (cum laude) at Utrecht University, The Netherlands, under the supervision of professor Max Viergever and professor Jan Koenderink. He has received personal research grants from the European Union (ERCIM and HCM, 1994-1995), from the Danish Research Council (1996) as well as from the national science foundation NWO (2000-2005), and has worked at several research institutes in Europe as a postdoctoral researcher. Since 2001 he has been affiliated with Eindhoven University of Technology (TU/e) as a tenured staff member, where he is now professor of Mathematical Image Analysis at the Department of Mathematics and Computer Science. His research group is part of the recently established Data Science Center Eindhoven (DSC/e). His research covers multiscale and differential geometric representations of images and their applications, with a focus on complex magnetic resonance images for cardiological and neurological applications.

- Dr. M. Alex O. Vasilescu (UCLA Computer Graphics and Vision Lab)

Title: You've got Data, We've Got Tensors: Linear and Multilinear Tensor Models for Computer Vision, Graphics and Machine Learning.

Abstract: The talk will address Face Recognition, Human Motion Recognition and Synthesis using Multilinear PCA, Multilinear ICA and Multilinear Projection.

Biography: M. Alex O. Vasilescu received her education at MIT and the University of Toronto. She was a research scientist at the MIT Media Lab from 2005–07 and at New York University’s Courant Institute of Mathematical Sciences from 2001–05. She has pioneered the development of multilinear (tensor) algebra for computer vision, computer graphics and machine learning. She has published papers in computer vision and computer graphics, particularly in the areas of face recognition, human motion analysis/synthesis, image-based rendering, and physics-based modeling (deformable models). She has given several invited talks and keynote addresses about her work and has several patents and patents pending. Her face recognition research, known as TensorFaces, has been funded by the TSWG, the Department of Defense’s Combating Terrorism Support Program. Her work was featured on the cover of Computer World, and in articles in the New York Times, Washington Times, etc. MIT’s Technology Review Magazine named her to their TR100 List of Top 100 Young Innovators. M. Alex O. Vasilescu's research on perceptual signatures distilled from biometric data is fundamental to human-centric technologies such as the burgeoning security industry; when directed at ourselves, it can enhance our self-awareness, understanding, and health, and it can facilitate our interaction with each other and with computers.

- Dr. Nadav Cohen (Hebrew University of Jerusalem)

Title: Expressive Efficiency and Inductive Bias of Convolutional Networks: Analysis and Design via Hierarchical Tensor Decompositions.

Abstract: The driving force behind convolutional networks, the most successful deep learning architecture to date, is their expressive power. Although this belief is widely accepted and backed by vast empirical evidence, formal analyses supporting it are scarce. The primary notions for formally reasoning about expressiveness are efficiency and inductive bias. Expressive efficiency refers to the ability of a network architecture to realize functions that would require an alternative architecture to be much larger. Inductive bias refers to the prioritization of some functions over others given prior knowledge regarding the task at hand. Through an equivalence to hierarchical tensor decompositions, we study the expressive efficiency and inductive bias of various convolutional network architectural features. Our results shed light on the demonstrated effectiveness of convolutional networks and, in addition, provide new tools for network design.

The talk is based on a series of works from COLT'16, ICML'16, CVPR'16 and ICLR'17 (as well as several new pre-prints), with collaborators Or Sharir, Yoav Levine, Ronen Tamari, David Yakira and Amnon Shashua.

Biography: Nadav Cohen has recently concluded his PhD at the School of Computer Science and Engineering in the Hebrew University of Jerusalem, under the supervision of Prof. Amnon Shashua. His research focuses on the theoretical and algorithmic foundations of deep learning. In particular, he is interested in the application of tensor analysis for the study of convolutional network architectures. Nadav holds a BSc in electrical engineering and a BSc in mathematics (both summa cum laude), conducted under the Technion Excellence Program for distinguished undergraduates. For his research in graduate studies, he received a number of awards, including the Google Doctoral Fellowship in Machine Learning, the Rothschild Postdoctoral Fellowship, and the Zuckerman Postdoctoral Fellowship. Starting fall 2017, Nadav will be a postdoctoral member at the Institute for Advanced Study (IAS) in Princeton.

- Prof. René Vidal (Johns Hopkins University)

Title: Globally Optimal Structured Low-Rank Matrix and Tensor Factorization. Abstract: Matrix, tensor, and other factorization techniques are used in many applications and have enjoyed significant empirical success in many fields. However, common to a vast majority of these problems is the significant disadvantage that the associated optimization problems are typically non-convex due to a multilinear form or other convexity destroying transformation. Building on ideas from convex relaxations of matrix factorizations, in this talk I will present a very general framework which allows for the analysis of a wide range of non-convex factorization problems – including matrix factorization, tensor factorization, and deep neural network training formulations. In particular, I will present sufficient conditions under which a local minimum of the non-convex optimization problem is a global minimum and show that if the size of the factorized variables is large enough then from any initialization it is possible to find a global minimizer using a local descent algorithm.
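The claim that local descent can reach a global minimizer once the factors are large enough can be illustrated on a well-known special case. The sketch below is not the framework of the talk. It assumes the standard regularized matrix factorization objective 0.5·||Y − UVᵀ||² + 0.5·λ(||U||² + ||V||²), whose global minimum coincides with that of the convex nuclear-norm problem, solved in closed form by soft-thresholding the singular values of Y. The matrix sizes, singular values, `lam`, step size and iteration count are all illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Build a 6x5 matrix Y with known singular values (illustrative choice).
A, _ = np.linalg.qr(rng.standard_normal((6, 5)))  # orthonormal columns
B, _ = np.linalg.qr(rng.standard_normal((5, 5)))  # orthogonal matrix
sing_vals = np.array([3.0, 2.0, 1.0, 0.5, 0.2])
Y = A @ np.diag(sing_vals) @ B.T
lam = 0.7  # regularization weight (assumed value)


def factored_objective(U, V):
    """Non-convex objective in the factors U and V."""
    residual = Y - U @ V.T
    return 0.5 * np.sum(residual**2) + 0.5 * lam * (np.sum(U**2) + np.sum(V**2))


# Global optimum of the convex counterpart
#   min_X 0.5 * ||Y - X||_F^2 + lam * ||X||_*
# obtained by soft-thresholding the singular values of Y.
shrunk = np.maximum(sing_vals - lam, 0.0)
convex_optimum = 0.5 * np.sum((sing_vals - shrunk) ** 2) + lam * np.sum(shrunk)

# Plain gradient descent on the factors from a small random initialization.
r = 5  # number of factor columns, at least the rank of the convex solution
U = 0.1 * rng.standard_normal((6, r))
V = 0.1 * rng.standard_normal((5, r))
step = 0.02
for _ in range(20000):
    residual = Y - U @ V.T
    grad_U = -residual @ V + lam * U
    grad_V = -residual.T @ U + lam * V
    U, V = U - step * grad_U, V - step * grad_V

# Compare the value reached by local descent with the convex optimum.
print(factored_objective(U, V), convex_optimum)
```

Comparing the two printed values shows how close gradient descent on the non-convex factored problem gets to the global optimum of the convex problem in this particular setting.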

Biography: Professor Vidal received his B.S. degree in Electrical Engineering from the Pontificia Universidad Católica de Chile in 1997 and his M.S. and Ph.D. degrees in Electrical Engineering and Computer Sciences from the University of California at Berkeley in 2000 and 2003, respectively. In 2004 he joined the Johns Hopkins University, where he is currently a Professor in the Center for Imaging Science and the Department of Biomedical Engineering. Dr. Vidal is co-author of the book “Generalized Principal Component Analysis” (2016), co-editor of the book “Dynamical Vision” (2006), and co-author of more than 200 articles in machine learning, computer vision, biomedical image analysis, hybrid systems, robotics and signal processing. Dr. Vidal is Associate Editor of the IEEE Transactions on Pattern Analysis and Machine Intelligence, the SIAM Journal on Imaging Sciences, Computer Vision and Image Understanding, and Medical Image Analysis. He has been Program Chair for ICCV 2015 and CVPR 2014, and Area Chair for all major conferences in machine learning, computer vision, and medical image analysis. Dr. Vidal has received many awards for his work including the 2012 J.K. Aggarwal Prize, the 2009 ONR Young Investigator Award, the 2009 Sloan Research Fellowship, the 2005 NSF CAREER Award, and best paper awards in computer vision (ICCV-3DRR 2013, PSIVT 2013, ECCV 2004), controls (CDC 2012, CDC 2011) and medical robotics (MICCAI 2012). Dr. Vidal was elected fellow of the IEEE in 2014 and fellow of the IAPR in 2016.

- Prof. Richard Hartley (Australian National University)

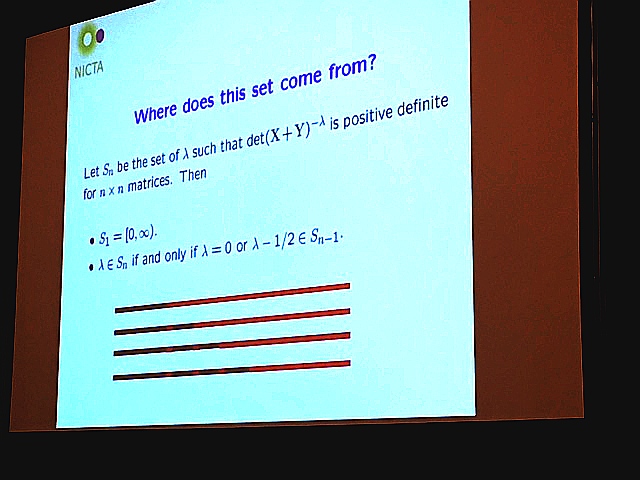

Title: Learning Methods and Optimization on Matrix Manifolds and Matrix Lie Groups. Abstract:

In this talk I will discuss some results involving learning on matrix manifolds and Lie groups, such as the cone of positive definite matrices, Grassmann and Stiefel manifolds, SO(3) and the Essential manifold. Some results will be presented about the existence of kernels on such manifolds. An interesting phenomenon concerns the positive-definiteness of radial-basis function kernels on the cone of positive-definite matrices furnished with the Stein metric, in which the standard RBF kernel is positive-definite for some scales but not others.

The focus of the talk will be the mathematical theory behind the results. Applications of these results to practical problems are discussed in cited literature.
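For readers who want to experiment with the scale dependence mentioned in the abstract, the following is a minimal sketch and is not taken from the talk. It assumes the Stein divergence in its usual form S(X, Y) = log det((X + Y)/2) − ½ log det(XY) and an RBF-type kernel exp(−scale · S(X, Y)). The helper names (`stein_divergence`, `random_spd`, `stein_gram`), the matrix size, the sample count and the tested scales are all illustrative assumptions.

```python
import numpy as np


def stein_divergence(X, Y):
    """Stein divergence between two symmetric positive-definite matrices."""
    _, logdet_mid = np.linalg.slogdet((X + Y) / 2.0)
    _, logdet_x = np.linalg.slogdet(X)
    _, logdet_y = np.linalg.slogdet(Y)
    return logdet_mid - 0.5 * (logdet_x + logdet_y)


def random_spd(n, rng):
    """Draw a random n x n symmetric positive-definite matrix."""
    A = rng.standard_normal((n, n))
    return A @ A.T + 1e-3 * np.eye(n)


def stein_gram(mats, scale):
    """Gram matrix of the kernel exp(-scale * S(X, Y)) over a list of SPD matrices."""
    m = len(mats)
    K = np.empty((m, m))
    for i in range(m):
        for j in range(m):
            K[i, j] = np.exp(-scale * stein_divergence(mats[i], mats[j]))
    return K


rng = np.random.default_rng(0)
mats = [random_spd(3, rng) for _ in range(30)]

# A negative smallest eigenvalue certifies that the kernel is not
# positive-definite at that scale. A non-negative one is only consistent
# with positive-definiteness on this particular sample.
for scale in (0.1, 0.5, 1.0, 2.0):
    K = stein_gram(mats, scale)
    print(scale, np.linalg.eigvalsh(K).min())
```

A single random sample cannot prove positive-definiteness, so the printed eigenvalues are only a quick numerical probe. The precise characterization of admissible scales is the subject of the theory discussed in the talk.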

Biography: Richard Hartley is a member of the computer vision group in the Research School of Engineering, at the Australian National University, where he has been since January, 2001. He is a joint leader of the Computer Vision group in NICTA, a government funded research laboratory. Dr. Hartley worked at the General Electric Research and Development Center from 1985 to 2001, working first in VLSI design, and later in computer vision. He became involved with Image Understanding and Scene Reconstruction working with GE's Simulation and Control Systems Division. In 1991, he began an extended research effort in the area of applying projective geometry techniques to reconstruction using calibrated and semi-calibrated cameras. This research direction was one of the dominant themes in computer vision research throughout the 1990s. In 2000, he co-authored (with Andrew Zisserman) a book on Multiview Geometry in Computer Vision, summarizing the previous decade’s research in this area.

IMPORTANT DATES

- Submission Deadline: 1st of April, 2017, extended to 20th of April, 2017 (closed)

- Reviews submitted in CMT: 5th of May, 2017, moved to 11th of May (reviews are now available, please also read metareviews)

- Decision to Authors: 8-10th of May, 2017, moved to 11th of May (decisions are now available)

- Camera Ready: 15th of May, 2017, moved to 17th of May (camera ready submission is now closed)

- TMCV Workshop: 26th of July, 2017

ACCEPTED PAPERS

- Exploration of Social and Web Image Search Results Using Tensor Decomposition.

Liuqing Yang, Evangelos E. Papalexakis.

- Graph-Regularized Generalized Low-Rank Models.

Mihir Paradkar, Madeleine Udell.

- Exploring the Granularity of Sparsity in Convolutional Neural Networks.

Huizi Mao, Song Han, Jeff Pool, Wenshuo Li, Xingyu Liu, Yu Wang, William J. Dally.

- Human Action Recognition Using Tensor Dynamical System Modeling.

Chan-Su Lee.

- Tensor Contraction Layers for Parsimonious Deep Nets.

Jean Kossaifi, Aran Khanna, Zachary Lipton, Tommaso Furlanello, Anima Anandkumar.

TECHNICAL PROGRAM COMMITTEE

- We would like to thank our reviewers for their swift work and valuable comments: Arash Shahriari, Chong You, Eric Kim, Forough Arabshahi, Manolis Tsakiris, Masoud Faraki, Mathieu Salzmann, Mehrtash Harandi, Nadav Cohen, Or Sharir, Otto Debals, Qi (Rose) Yu, Rafael Ballester-Ripoll, Renato Pajarola, Rong Ge, Ruiping Wang, Toni Ivanov, Yan Liu, Yang Shi.

CITATION

If you wish to cite any topics raised during the workshop, refer to specific papers of our speakers. Additionally, you are welcome to cite the workshop itself:

@misc{tmcv_workshop_2017,

title = {Tensor Methods in Computer Vision},

author = {P. Koniusz and A. Cherian and F. Porikli},

howpublished = {CVPR Workshop, \url{http://users.cecs.anu.edu.au/~koniusz/tensors-cvpr17}},

note = {Accessed: 15-08-2017},

year = {2017},

}

ORGANISERS

- Dr. Piotr Koniusz (Data61/CSIRO and the Australian National University)

- Dr. Anoop Cherian (Australian National University)

- Prof. Fatih Porikli (Australian National University)